One of the main functions of drones is to collect data. Depending on the types of data a drone collects, intentionally or unintentionally, operators must also consider how to secure that data and the ethics of collecting it. For example, once large amounts of personal data were being amassed from people all over the world, and that data was being exploited both legally and illegally, laws like the GDPR were put in place to outline practices for protecting personal data and to make data collection and use more transparent. These rules and regulations have significantly changed how companies collect and handle personal data. But with surveillance, companies are collecting different types of data altogether: where you go, what you look like, and what you do. What are the regulations and protocols for protecting that data, especially when it is collected by a drone?

Regulations for this kind of data acquisition are not as well defined. Most commercial drone operators aren’t interested in collecting this type of data; they are much more interested in finding cracks in wind turbines or collecting survey and mapping data. Still, there is a segment of the commercial drone industry that could better serve the public by collecting data on crowds of people. This type of application is problematic, however, because no one wants to think they are being watched.

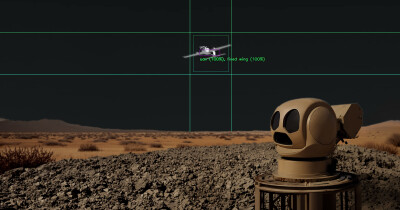

Drones can capture large amounts of visual data quickly, which can help law enforcement monitor potentially dangerous situations and keep the public safe. But for the reasons mentioned, getting approval to test and deploy projects like these has been a challenge. This is where machine vision and machine learning are stepping in to find solutions and enable this technology.

At one of Amsterdam Drone Week Hybrid 2020’s panel discussions, “AI in the Sky,” Aik van Eemeren, Lead Public Tech at City of Amsterdam; Ronald Westerhof, Manager Safety and Law at Municipality of Apeldoorn; Sander Klous, Professor Big Data Ecosystems for Business and Society at University of Amsterdam; Servaz van Berkum, Moderator at Pakhuis de Zwijger; and Theo van der Plas, Programme Director Digitalisation and Cybercrime at Dutch National Police discussed how AI could protect people from identification while still enabling law enforcement to detect bad actors or people in need of help.

The AI being tested in Amsterdam and Apeldoorn, a collaboration between the City of Amsterdam’s AI team, universities, and the Dutch National Police, can blur out unnecessary information, such as details of people, cars, or places, before the footage is stored. This lets police detect, but not identify, these objects. Detection is seeing someone in the distance and knowing it is a human without being able to make out who they are; identification is recognizing that person as your Aunt Hilda.
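The "blur before storage" idea can be illustrated with a small sketch. The panel did not describe the actual implementation, so everything here is an assumption made for illustration: the frame is a tiny grayscale grid, the bounding boxes are taken as given from some upstream detector, and `pixelate_region` and `anonymize_before_storage` are hypothetical helpers. Each block of pixels inside a box is replaced with its average, so the stored frame still shows that something is there but loses the fine detail needed to tell who or what it is.

```python
from typing import List, Sequence, Tuple

Frame = List[List[int]]          # grayscale pixels (0-255), indexed frame[row][col]
Box = Tuple[int, int, int, int]  # (top, left, bottom, right); bottom/right exclusive


def pixelate_region(frame: Frame, box: Box, block: int = 2) -> Frame:
    """Return a copy of `frame` with the area inside `box` pixelated.

    Hypothetical helper: every `block` x `block` tile inside the box is
    replaced by the integer mean of its pixels. The input is not modified.
    """
    if block < 1:
        raise ValueError("block must be at least 1")

    height = len(frame)
    width = len(frame[0]) if frame else 0

    # Clamp the box so it never reaches outside the frame.
    top, left, bottom, right = box
    top, left = max(0, top), max(0, left)
    bottom, right = min(height, bottom), min(width, right)

    out = [row[:] for row in frame]
    for r0 in range(top, bottom, block):
        for c0 in range(left, right, block):
            r1 = min(r0 + block, bottom)
            c1 = min(c0 + block, right)
            cells = [frame[r][c] for r in range(r0, r1) for c in range(c0, c1)]
            mean = sum(cells) // len(cells)
            for r in range(r0, r1):
                for c in range(c0, c1):
                    out[r][c] = mean
    return out


def anonymize_before_storage(frame: Frame, boxes: Sequence[Box], block: int = 2) -> Frame:
    """Pixelate every detected box; only the returned frame would be stored."""
    for box in boxes:
        frame = pixelate_region(frame, box, block)
    return frame
```

The key design point in this sketch is ordering: anonymization runs before anything is written to storage, so the detailed original never needs to be kept.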

The AI protects individuals’ identities while giving public safety agencies another tool for tasks like crowd counting, detecting bad actors, and monitoring beaches for people who are drowning. But there are still challenges in deploying these technologies, such as communicating how they work to the public and other key stakeholders.

People need to understand what a technology does, why it does it, and how it impacts them and their community. The panel pointed out that this isn’t a one-size-fits-all approach: different stakeholders require different types of information depending on who and what they are responsible for. For instance, the public may only need to understand how the technology works at the level described in this article, but a public official may need the specific data points, cost analysis, algorithms, and more in order to support a proposal and implement it. Understanding what needs to be communicated, and how best to communicate it, is important.

One of the compelling methods discussed was a live public demonstration that let people interact with the technology and see how it worked. In this demonstration, a camera equipped with the AI was set up in a public space to monitor social distancing. When people got too close to one another, a light would shine yellow, then red as they got closer still. When they moved apart, it would turn green. This simple, interactive form of communication helped people understand how the technology worked, and it earned the project the support it needed to continue development and planning for implementation.
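The light's behavior can be sketched in a few lines. This is only an illustration of the logic as described, not the demonstration's actual code: the function names are hypothetical, people are assumed to be already located as (x, y) positions in meters, and the 2.0 m and 1.5 m thresholds are assumed values, since the panel did not state the distances used.

```python
import math
from itertools import combinations
from typing import Iterable, Tuple

Point = Tuple[float, float]  # (x, y) position in meters (assumed input format)


def closest_pair_distance(positions: Iterable[Point]) -> float:
    """Smallest distance between any two people; infinity if fewer than two."""
    points = list(positions)
    return min(
        (math.dist(a, b) for a, b in combinations(points, 2)),
        default=math.inf,
    )


def light_color(
    positions: Iterable[Point],
    warn_m: float = 2.0,    # assumed threshold for yellow
    danger_m: float = 1.5,  # assumed threshold for red
) -> str:
    """Map the closest pair of people to a light color."""
    distance = closest_pair_distance(positions)
    if distance < danger_m:
        return "red"
    if distance < warn_m:
        return "yellow"
    return "green"


if __name__ == "__main__":
    # Two people walking toward each other along a line.
    for gap in (3.0, 1.8, 1.0):
        print(gap, light_color([(0.0, 0.0), (gap, 0.0)]))
```

Note that this logic needs only positions, not identities, which is consistent with the detect-but-not-identify approach the panel described.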

But even with these steps, the panel reported that the process is slow, and for good reason: building trust in the safety and security of drone technology will take time. Adopting technology involves ethical choices. Every piece of technology affects how society lives, and the panel took that very seriously. How this technology will shape their society was just as important to them as its potential, and they called for each society to discuss and outline where technology fits and doesn’t fit within its own culture.

At this point, AI in the sky will most likely be deployed first for life-saving missions, with other applications following as they fit the needs of society. But it is difficult to ignore that the technology being developed in Amsterdam today has broad application and may one day be an integral part of how the industry builds public trust. If a drone flies over a public place or even your property, but you know it is equipped with this technology, the fear of being spied on may be lessened, and that has far-reaching implications beyond public safety.

To watch this panel on demand or learn more about the drone industry, visit Amsterdam Drone Week's Hybrid Event.
